— AMD unleashes the power of specialized compute for the data center with new AMD EPYC processors for cloud native and technical computing —

— AMD reveals details on next-generation AMD Instinct products for generative AI and highlights AI software ecosystem collaborations with Hugging Face and PyTorch —

Today, at the “Data Center and AI Technology Premiere,” AMD (NASDAQ: AMD) announced the products, strategy and ecosystem partners that will shape the future of computing, highlighting the next phase of data center innovation. AMD was joined on stage by executives from Amazon Web Services (AWS), Citadel, Hugging Face, Meta, Microsoft Azure and PyTorch, who showcased their technology partnerships with AMD to bring the next generation of high performance CPU and AI accelerator solutions to market.

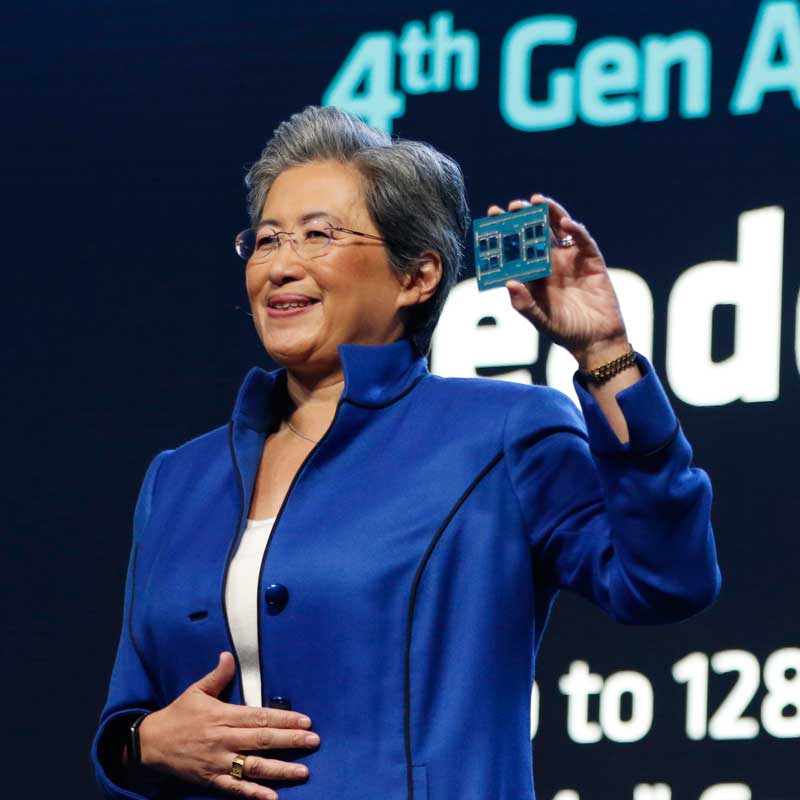

“Today, we took another significant step forward in our data center strategy as we expanded our 4th Gen EPYC processor family with new leadership solutions for cloud and technical computing workloads and announced new public instances and internal deployments with the largest cloud providers,” said AMD Chair and CEO Dr. Lisa Su. “AI is the defining technology shaping the next generation of computing and the largest strategic growth opportunity for AMD. We are laser focused on accelerating the deployment of AMD AI platforms at scale in the data center, led by the launch of our Instinct MI300 accelerators planned for later this year and the growing ecosystem of enterprise-ready AI software optimized for our hardware.”

Compute Infrastructure Optimized for the Modern Data Center

AMD unveiled a series of updates to its 4th Gen EPYC family, designed to give customers the workload specialization their businesses need.

- Advancing the World’s Best Data Center CPU. AMD highlighted how the 4th Gen AMD EPYC processor continues to drive leadership performance and energy efficiency. AWS joined AMD to preview the next-generation Amazon Elastic Compute Cloud (Amazon EC2) M7a instances, powered by 4th Gen AMD EPYC processors (“Genoa”). Separately from the event, Oracle announced plans to make new Oracle Cloud Infrastructure (OCI) E5 instances with 4th Gen AMD EPYC processors available.

- No Compromise Cloud Native Computing. AMD introduced the 4th Gen AMD EPYC 97X4 processors, formerly codenamed “Bergamo.” With 128 “Zen 4c” cores per socket, these processors provide the greatest vCPU density and industry-leading performance for applications that run in the cloud, along with leadership energy efficiency. Meta joined AMD to discuss how these processors are well suited to its mainstay applications, such as Instagram and WhatsApp. Meta said it is seeing impressive performance gains with 4th Gen AMD EPYC 97X4 processors compared with 3rd Gen AMD EPYC across various workloads, as well as substantial TCO improvements, and described how AMD and Meta optimized the EPYC CPUs for Meta’s power-efficiency and compute-density requirements.

- Enabling Better Products With Technical Computing. AMD introduced the 4th Gen AMD EPYC processors with AMD 3D V-Cache technology, the world’s highest-performance x86 server CPU for technical computing. Microsoft announced the general availability of Azure HBv4 and HX instances, powered by 4th Gen AMD EPYC processors with AMD 3D V-Cache technology.

Learn more about the latest 4th Gen AMD EPYC processors here (https://streak-link.com/Bi5MVFNbG4L-5IFlTQZDTsRf/https%3A%2F%2Fwww.amd.com%2Fen%2Fprocessors%2Fepyc-9004-series), and read what AMD customers have to say here (https://streak-link.com/Bi5MVFNUVKedkoEecwNdgjT1/https%3A%2F%2Fwww.amd.com%2Fen%2Fsolutions%2Fdata-center%2Fdata-center-ai-premiere%2Findustry-support.html).

AMD AI Platform – The Pervasive AI Vision

Today, AMD unveiled a series of announcements showcasing its AI Platform strategy, which gives customers a cloud-to-edge-to-endpoint portfolio of hardware products, backed by deep industry software collaboration, for developing scalable and pervasive AI solutions.

- Introducing the World’s Most Advanced Accelerator for Generative AI. AMD revealed new details of the AMD Instinct MI300 Series accelerator family, including the introduction of the AMD Instinct MI300X accelerator, the world’s most advanced accelerator for generative AI. The MI300X is based on the next-gen AMD CDNA 3 accelerator architecture and supports up to 192 GB of HBM3 memory, providing the compute and memory efficiency needed for large language model training and inference in generative AI workloads. With this much memory, customers can fit large language models such as Falcon-40B, a 40-billion-parameter model, on a single MI300X accelerator. AMD also introduced the AMD Instinct Platform, which combines eight MI300X accelerators in an industry-standard design for AI inference and training. The MI300X will begin sampling to key customers in Q3. AMD also announced that the AMD Instinct MI300A, the world’s first APU accelerator for HPC and AI workloads, is now sampling to customers.

- Bringing an Open, Proven and Ready AI Software Platform to Market. AMD showcased the ROCm software ecosystem for data center accelerators, highlighting its readiness and its collaborations with industry leaders to build an open AI software ecosystem. PyTorch discussed the work between AMD and the PyTorch Foundation to fully upstream the ROCm software stack, providing immediate “day zero” support for PyTorch 2.0 with ROCm release 5.4.2 on all AMD Instinct accelerators. This integration gives developers a wide range of PyTorch-based AI models that are compatible and ready to use “out of the box” on AMD accelerators. Hugging Face, the leading open platform for AI builders, announced that it will optimize thousands of Hugging Face models on AMD platforms, from AMD Instinct accelerators to AMD Ryzen and AMD EPYC processors, AMD Radeon GPUs, and Versal and Alveo adaptive processors.
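As a rough illustration of the single-accelerator Falcon claim, the weights-only memory arithmetic can be sketched in Python. This is a back-of-the-envelope check, not AMD’s sizing method. It assumes 40 billion parameters (the figure quoted above), the standard 4, 2 and 1 bytes per parameter for FP32, FP16/BF16 and INT8, treats 1 GB as 10^9 bytes, and ignores the KV cache, activations and runtime overhead that real inference also needs. The helper names are hypothetical.

```python
# Back-of-the-envelope check: do a model's weights fit in one accelerator's memory?
# Assumptions (hypothetical helpers, not an AMD tool): weights only, no KV cache,
# activations or framework overhead; 1 GB = 1e9 bytes.

BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "bf16": 2, "int8": 1}


def weight_footprint_gb(num_params: float, dtype: str) -> float:
    """Return the storage needed for the model weights alone, in GB."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


def fits_on_device(num_params: float, dtype: str, device_mem_gb: float) -> bool:
    """True if the weights alone fit within the device's memory."""
    return weight_footprint_gb(num_params, dtype) <= device_mem_gb


MI300X_HBM_GB = 192      # HBM3 capacity quoted in the announcement
FALCON_40B_PARAMS = 40e9  # parameter count quoted in the announcement

if __name__ == "__main__":
    for dtype in ("fp32", "fp16", "int8"):
        gb = weight_footprint_gb(FALCON_40B_PARAMS, dtype)
        fits = fits_on_device(FALCON_40B_PARAMS, dtype, MI300X_HBM_GB)
        print(f"{dtype}: {gb:.0f} GB of weights, fits in {MI300X_HBM_GB} GB: {fits}")
```

At FP16 the weights come to roughly 80 GB, less than half of the quoted 192 GB, which leaves headroom for the KV cache and activations that a weights-only estimate like this leaves out.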

A Robust Networking Portfolio for the Cloud and Enterprise

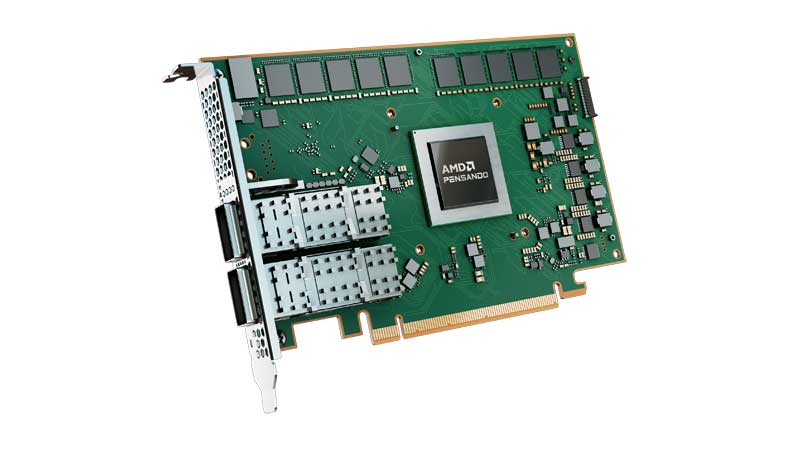

AMD showcased a broad networking portfolio including the AMD Pensando DPU, AMD Ultra Low Latency NICs and AMD Adaptive NICs. AMD Pensando DPUs combine a robust software stack with “zero trust security” and a leadership programmable packet processor to create the world’s most intelligent and performant DPU. The AMD Pensando DPU is deployed at scale across cloud partners such as IBM Cloud, Microsoft Azure and Oracle Cloud Infrastructure. In the enterprise, it is deployed in the HPE Aruba CX 10000 Smart Switch, with customers such as leading IT services company DXC, and as part of VMware vSphere® Distributed Services Engine™, accelerating application performance for customers.

AMD highlighted the next generation of its DPU roadmap, codenamed “Giglio,” which aims to deliver better performance and power efficiency than current-generation products and is expected to be available by the end of 2023.

AMD also announced the AMD Pensando Software-in-Silicon Developer Kit (SSDK), which lets customers rapidly develop or migrate services to deploy on the AMD Pensando P4 programmable DPU, alongside the rich set of features already implemented on the AMD Pensando platform. The AMD Pensando SSDK enables customers to put the leadership AMD Pensando DPU to work and tailor network virtualization and security features within their infrastructure.
